IJNSA 02

A Proposed Model for Dimensionality Reduction to Improve the Classification Capability of Intrusion Protection Systems

Hajar Elkassabi, Mohammed Ashour and Fayez Zakit

Department of Electronics & Communication Faculty of Engineering,

Mansoura University, Mansoura, Egypt

ABSTRACT

Over the past few years, intrusion protection systems have become a mature research area in the field of computer networks. Excessive features have a significant impact on intrusion detection performance. Many previous studies have used machine learning algorithms to classify network traffic as harmful or normal. To obtain high accuracy, we must therefore reduce the dimensionality of the data used. This paper proposes a new model based on a combination of feature selection and machine learning algorithms. The model depends on genes selected from every feature to increase the accuracy of intrusion detection systems: from each feature's contents, we selected only those that have an impact on attack detection. Performance was evaluated by comparison with several well-known algorithms, using the NSL-KDD dataset to examine classification. The proposed model outperformed the other learning approaches with an accuracy of 98.8%.

KEYWORDS

NSL-KDD, Machine Learning, Intrusion Detection Systems, Classification, Feature Selection.

1.INTRODUCTION

Securing the network against all kinds of threats is an essential part of system security management. As risks keep increasing, security systems need to be smarter than ever before. Regular security measures such as firewalls and antivirus software cannot stop the growing number of complex attacks that take place over a network connection to the Internet. Intrusion protection systems (IPS) were introduced as an additional safety layer to improve network security. They can be viewed as additional protection measures built on an intrusion detection framework to avoid malicious attacks [1]. The literature describes two main methods for detecting intrusions, one based on anomalies and the other based on signatures [2]. In the first technique, the intrusion protection system searches for data that falls outside the behaviour of normal data and treats any such data as a potential attack. Anomalies are detected by studying established statistical behaviour, so a deviation from the normal flow is flagged as an anomaly that may indicate an intrusion within the network. One of the main advantages of this method is that it helps identify unknown attacks. It can also detect attacks accurately, with low false positive and false negative rates. One of its disadvantages is that performance degrades because of the regular changes that occur in the network, so the normal traffic profile should be updated from time to time to avoid this problem. Signature-based detection, also called misuse-based detection, instead searches a list of signatures or intrusion patterns to detect malicious data. This type of detection relies on regular updates of its signature database. When an attack occurs, a signature of the attack is created, and signatures of known attacks help detect future attacks. An advantage of these techniques is that they analyse and detect known attacks accurately and effectively, with a low false alarm rate. The problem with existing signature-based methods is that zero-day attacks cannot be detected [3].

Anomaly detection in a data set depends mainly on an appropriate choice of features, or dimensionality. The chosen features must maintain detection accuracy while allowing calculations to run quickly. Dimensionality reduction is an effective way to improve the overall performance of intrusion prevention systems because it reduces the number of features used to detect an intrusion to the lowest possible value. If the excluded features are ineffective, the speed of anomaly detection improves significantly. It is essential that this increase in detection speed does not significantly affect detection accuracy. Conversely, choosing the wrong dimensionality, that is, excluding important features, reduces both operating speed and detection accuracy [4].

One of the methods suggested in the literature for detecting intrusion into computer networks is machine learning [5]. Many references point to the effectiveness of machine learning techniques for improving network classification. For machine-learning-based intrusion detection, it is not advisable to use all the features in the data set, because doing so adds to the computational burden. Choosing the right features, on the other hand, improves efficiency and reduces the time spent on learning. The relevant features are then used for further processing [6]. The performance of anomaly detection systems must be measured on a standard data set. The NSL-KDD dataset is a popular data set for testing the validity of methods proposed in intrusion protection studies, and many studies in this research area were conducted using it [7].

2.RELATED WORKS

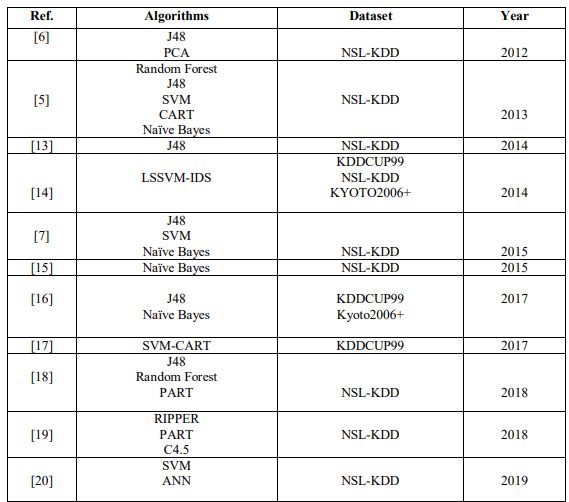

In recent years, researchers have carried out studies using the NSL-KDD dataset [8,9]. These studies concentrated on training and testing several machine learning algorithms, as shown in Table (1).

Sabhani and Serpen [9] used the decision tree (DT) algorithm and obtained high accuracy; however, this technique did not perform well on R2L and U2R attacks because they contain new attack types. Dhanabal and Shantharajah [7] applied the SVM, J48 and Naïve Bayes algorithms for classification. Applying correlation feature selection increased accuracy and reduced detection time.

Shrivastava, Sondhi and Ahirwar [10] presented an IDS framework that improves classification performance using machine learning algorithms. Deshmukh, Ghorpade and Padiya [11] focused on increasing accuracy using classifiers such as Naïve Bayes, after applying several pre-processing steps to the NSL-KDD dataset, such as discretization and feature selection.

Ingre and Yadav [12] evaluated performance on the NSL-KDD dataset using Artificial Neural Networks. Results were reported for several performance measures, such as false positive rate, accuracy and detection rate. Their model achieved better accuracy and a higher detection rate than existing models.

Table 1. Overview of previous machine learning techniques for intrusion detection

3.PROBLEM STATEMENT

Accessing information via the Internet, exchanging files over a network, sending and receiving emails with attachments, and using databases are now part of the daily routine of many people and businesses. Nearly all electronic communication must therefore manage the risks of today’s cyber world effectively to protect itself from malware attacks and hacking threats. Hackers exploit security vulnerabilities in computer networks to carry out these attacks and intrusions. A firewall may be used as a preventative measure, yet firewalls offer only minimal security. Usually, a single firewall is placed in front of a server to defend against external attacks. When hackers use fake packets that carry a malicious program, the protection mechanism is compromised because the firewall is tricked by the forged packets. In addition, the firewall is useless if the attack is performed from inside the network by an insider. A main element of security management is to protect the network against all sorts of attacks. Because threats are growing rapidly, security systems must be smarter than ever. The increasing number of complex attacks that take place over a network connection to the Internet cannot be stopped by regular security measures such as firewalls and antivirus software. Intrusion protection systems (IPS) have been proposed as an additional layer of security to enhance network protection. They can be considered additional safety measures based on an intrusion detection system to prevent intentional attacks [1].

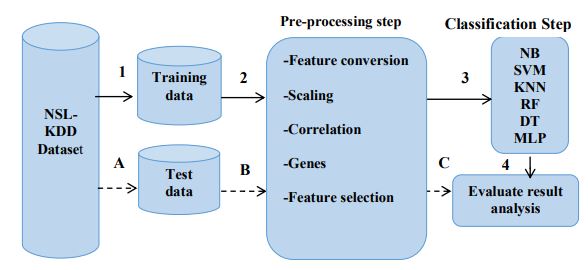

4.PROPOSED MODEL

The proposed system is a combination of feature selection and machine learning algorithms. The process steps are shown in Figure 1. In this paper we applied a new feature selection method that divides the contents of every feature into genes, using the NSL-KDD dataset. The results are compared before and after deleting the unimportant genes in every feature. For the simulation we used Python (3.7.3) and the Weka tool, which provide various machine learning algorithms and tools for data pre-processing, clustering, classification, visualization and data analysis. The experimental steps are:

- Import data set “train & test”

- Pre-process step (Data conversion, Data correlation, Data scaling).

- Run the classifier.

- Evaluate results analysis & Compare the results

We import the NSL-KDD training data, apply the pre-processing steps and feed the result to the classifier to complete the learning process. The test data file is pre-processed with the same steps. We then feed the system with this unseen data (test data) to validate what each classifier has learned, and the classifier accuracy is calculated. From the pre-processing step, we obtain a 16-feature subset that gives higher accuracy than the other subsets. We discovered that not all feature contents are important in attack detection. We named each feature content a {Gene}. We eliminate the unimportant genes in each of these 16 features, choosing only one effective gene per feature depending on its frequency with attacks.

Figure 1. Our Proposed Model for Feature Selection & Classification

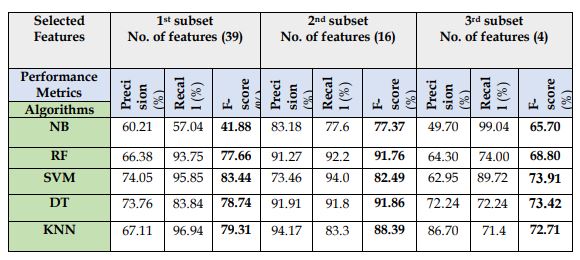

For example, attribute 9 has the values (genes) {0,1,2,3}. The attack symbols (A1 to A23) are listed in table (8). The new selection mechanism is described in table (2) and figure (2). Gene (0) has the highest detection rate across the 23 types of attacks that NSL-KDD contains, so we choose it from this feature. We do the same for every feature in our subset. The experiments show that this model has higher accuracy than one that contains all feature contents.

Table 2. Distribution of attacks with genes in feature (9)

Figure 2. Genes Distribution in feature (9)

5.RESEARCH METHODOLOGY

Network traffic consists of connections, sequences of packets that start and end at certain times as data is transmitted from a source IP address to a target IP address under a given protocol, such as the transmission control protocol (TCP). Every connection is classified either as normal or as an attack of exactly one specific type. This paper uses the NSL-KDD dataset, a modified version of the DARPA and KDD CUP99 datasets managed by MIT Lincoln Labs. Nine weeks of raw TCP dump data were obtained from Lincoln Labs for a local area network (LAN) simulating a typical US Air Force network [35]. The first seven weeks form the training set and the last two weeks form the test set. There are 42 variables in this dataset, one of which is the connection label, marked as an attack or normal. The variables fall into three categories:

1) Essential features: all features collected from the TCP/IP connection are included in this group.

2) Traffic characteristics: this class describes the characteristics that are measured over a time window.

3) Content features: features such as the number of failed login attempts, which can be evaluated to recognize suspicious behaviour.

6.DATASET VISUALIZATION

NSL-KDD (Network Security Laboratory Knowledge Discovery and Data Mining) is derived from the original KDD dataset. Each NSL-KDD record has 42 attributes, the last of which is the label or class. Each connection is labelled as an attack type or as normal [21]. NSL-KDD contains 39 attacks in total, each of which belongs to one of four major classes:

- DoS: denial of service, which means preventing legitimate users from accessing a service.

- R2L: Remote-to-Local, which means gaining unauthorized access to the victim machine from a remote machine.

- U2R: User-to-Root, which means a normal account is used to log in to a victim system and attempt to gain root privileges.

- Probing: checking and scanning the victim machine for vulnerabilities to collect data about it.

As shown in Tables (3, 4), NSL-KDD contains two files (training and testing). It is important to note that the test file includes attacks that do not exist in the training file.

Table 3. List of attacks presented in NSL-KDD

Table 4. NSL-KDD files Distributions

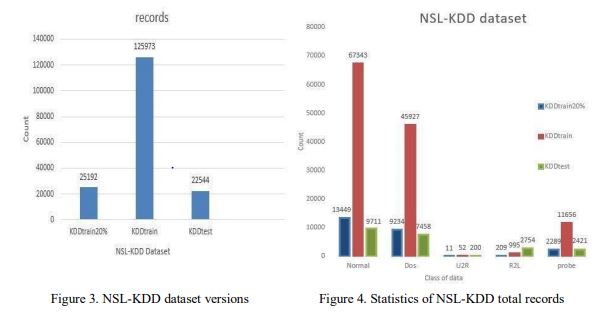

As shown in figures (3 & 4), the NSL-KDD dataset is available in three versions:

a. KDDTrain+ with a total of 125973 records.

b. KDDTrain+_20Percent, which consists of 20% of the training data, with 25192 records.

c. KDDTest+ with a total of 22544 records.

Even though the NSL-KDD dataset has a few issues, it is a very effective dataset for research purposes [10], [22]. In addition, real-world security datasets are hard to acquire given the nature of the security domain, even though other datasets exist.

7.SIMULATION TOOLS & SYSTEM CONFIGURATIONS

In the feature selection step that yields the 16-feature subset, we used Huffman coding in MATLAB. Huffman coding is a lossless data compression algorithm [36]. Its underlying process includes sorting values by frequency. Using this code enabled us to obtain the frequencies between attacks and features for all instances in NSL-KDD.
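As a rough illustration of how Huffman coding orders values by frequency, the following Python sketch builds a code table from symbol counts. It is not the authors' MATLAB code; the function name `huffman_codes` and the sample values are hypothetical and chosen only for illustration.

```python
import heapq
from collections import Counter


def huffman_codes(symbols):
    """Return a dict mapping each symbol to its Huffman bit string."""
    freq = Counter(symbols)
    if len(freq) == 1:
        # A single distinct symbol still needs a one-bit code.
        return {next(iter(freq)): "0"}
    # Heap entries: (frequency, unique tie breaker, {symbol: code so far}).
    heap = [(count, i, {sym: ""}) for i, (sym, count) in enumerate(freq.items())]
    heapq.heapify(heap)
    tie = len(heap)
    while len(heap) > 1:
        # Merge the two least frequent subtrees, prefixing their codes.
        f1, _, left = heapq.heappop(heap)
        f2, _, right = heapq.heappop(heap)
        merged = {s: "0" + c for s, c in left.items()}
        merged.update({s: "1" + c for s, c in right.items()})
        heapq.heappush(heap, (f1 + f2, tie, merged))
        tie += 1
    return heap[0][2]


# Hypothetical values (genes) of one feature across eight records.
sample = [0, 0, 0, 0, 0, 1, 1, 2]
codes = huffman_codes(sample)
```

In this sketch the most frequent value receives the shortest code, which is the frequency ordering the text refers to.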

The simulation tool used for the first and second experiments is Python 3.7. The third experiment uses deep learning with a multi-layer perceptron in the Waikato Environment for Knowledge Analysis (Weka) version 3.8.3. The machine runs Windows 10 Enterprise on an Intel® Core™ i5-3230M CPU @ 2.60 GHz with 6.00 GB of RAM.

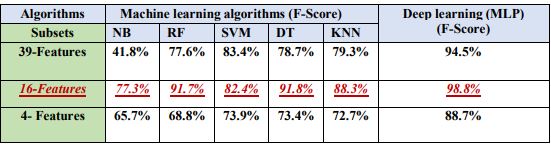

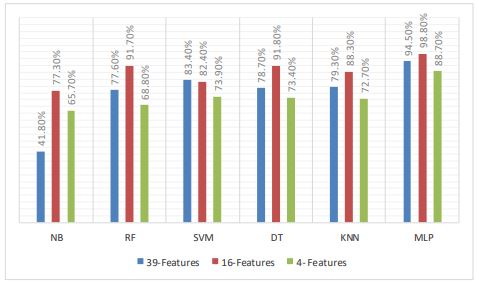

8.EXPERIMENTS AND RESULTS

We divided our work into three experiments. In the first, we used several subsets (39-feature, 16-feature and 4-feature) for training and testing, with five machine learning algorithms for classification (NB, KNN, SVM, DT, RF), and compared the results. The second experiment presents a new feature selection model for enhancing classification accuracy. The simulation tool used for the first and second experiments is Python 3.7. The third experiment uses deep learning with a multi-layer perceptron, with Weka as the simulation tool.

8.1. Performance Metrics

The following performance metrics have been used in our work:

● True Positive (TP): an attack record is correctly identified as an attack.

● True Negative (TN): a normal record is correctly identified as normal.

● False Positive (FP): the classifier identifies a normal record as an attack.

● False Negative (FN): the classifier identifies an attack as a normal record.

● F-measure: it is obtained from the following equations.
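The equations are not reproduced in this copy of the text. Assuming the standard definitions in terms of the four counts above (an assumption, not a reconstruction of the authors' exact notation), they would read:

```latex
\begin{align}
\text{Accuracy}  &= \frac{TP + TN}{TP + TN + FP + FN} \\
\text{Precision} &= \frac{TP}{TP + FP} \\
\text{Recall}    &= \frac{TP}{TP + FN} \\
F\text{-measure} &= \frac{2 \cdot \text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}}
\end{align}
```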

8.2. The First Experiment:

8.2.1. Pre-processing step

Pre-processing the data is an important task for accuracy, because data is mostly noisy and sometimes has missing values. Feature selection, or dimensionality reduction, is a major pre-processing method that directly impacts the accuracy of the model. Feature selection is the process of selecting some features from the data and discarding the irrelevant ones [24]. The NSL-KDD training data has 41 features and a class attribute that contains 23 types of attacks [7]. After removing features 20 & 21, which contain only zeros, we obtain the 1st subset [39-feature]. We measured the frequency between all features and the 23 types of attacks and found that features {9,11,13,15,21,22,23,24,27,28,29,30,31,37,40,41} have the highest effect on attack detection, as shown in table (8), so these [16-feature] form our 2nd subset. From [14],[13],[25],[26],[18],[27] we obtained a third subset, which contains the [4-features] [3,5,12,26] common to the previous research.

Our pre-processing step consists of data visualization, data conversion & scaling, and data correlation, applied to all three subsets.

A) Data visualization

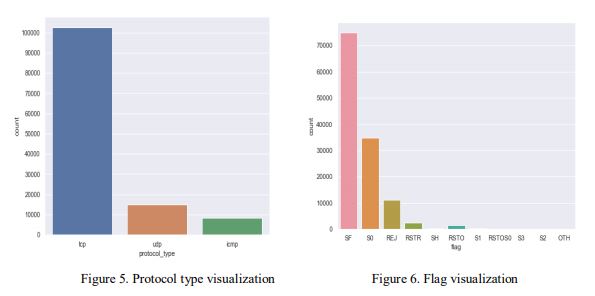

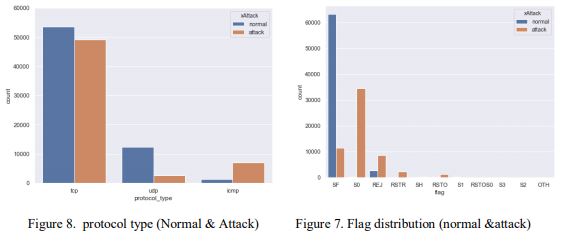

As shown in Figure (9), the Xattack column in the training data contains different types of attacks. We modify it so that it has only two unique values (attack & normal), as clarified for the training data in figures (5,6,7,8).

Figure 9. Visualization for XAttack column in train data
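The relabelling of the attack column can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the helper name `to_binary_label` and the five sample labels are hypothetical, and the paper does not show how the authors implemented this step.

```python
def to_binary_label(attack_name):
    """Collapse a specific attack name into 'attack'; keep 'normal' as is."""
    return "normal" if attack_name.strip().lower() == "normal" else "attack"


# Hypothetical sample of the label column before relabelling.
labels = ["normal", "neptune", "smurf", "normal", "satan"]
binary = [to_binary_label(name) for name in labels]
```

After this mapping the column holds exactly two unique values, which turns the task into binary classification.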

B) Data conversion & scaling

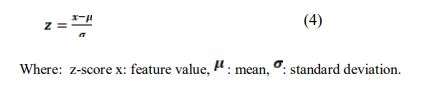

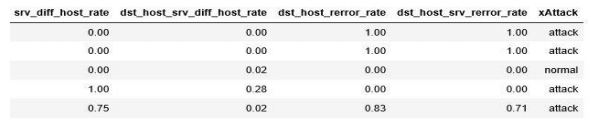

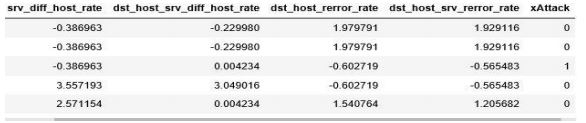

Table (5) displays the first five rows of the training data. Some features are numerical and others are categorical, and the feature values differ widely in range. We therefore convert all categorical features to numerical ones and scale all data to the same range. The features are scaled so that they have the properties of a standard normal distribution with μ=0 and σ=1, which facilitates the classification step and gives precise results [23].

Table 5. Train Data Sample before Pre-processing

Table 6. Train Data Sample after Pre-processing.

Table (6) shows that all data has been converted to numerical values in the same range. We applied the same steps to the test data to prepare it for validation.
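The conversion and scaling steps can be sketched with the Python standard library alone. The helper names and the two sample columns below are hypothetical, and integer label encoding is only one possible way to make categorical values numerical; the paper does not state which encoding the authors used.

```python
import math


def fit_label_encoder(values):
    """Map each distinct categorical value to an integer code."""
    return {v: i for i, v in enumerate(sorted(set(values)))}


def fit_standardizer(column):
    """Return the mean and population standard deviation of a numeric column."""
    mean = sum(column) / len(column)
    var = sum((x - mean) ** 2 for x in column) / len(column)
    return mean, math.sqrt(var)


def standardize(column, mean, std):
    """Scale a column to zero mean and unit variance; constant columns map to 0."""
    if std == 0:
        return [0.0 for _ in column]
    return [(x - mean) / std for x in column]


# Hypothetical training columns: a categorical protocol and a numeric byte count.
protocol = ["tcp", "udp", "tcp", "icmp"]
src_bytes = [100.0, 300.0, 500.0, 700.0]

encoder = fit_label_encoder(protocol)
protocol_num = [encoder[p] for p in protocol]
mean, std = fit_standardizer(src_bytes)
src_bytes_scaled = standardize(src_bytes, mean, std)
```

A common design choice, assumed here rather than taken from the paper, is to compute the encoder, mean and standard deviation on the training data only and reuse them unchanged on the test data, so that both files are transformed consistently.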

C) Data Correlation

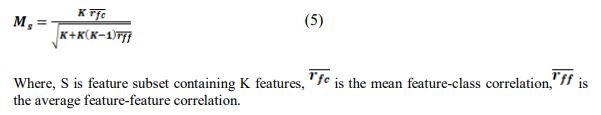

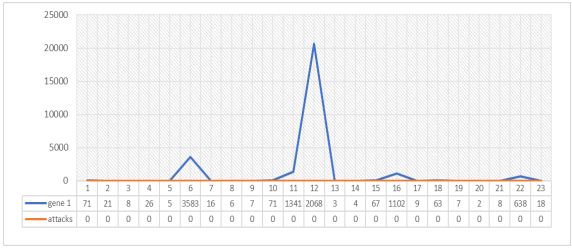

Correlation is a popular and effective technique for choosing the most relevant features in a dataset. It describes the strength of association between features [22]. The following equation describes the evaluation function.
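The equation does not appear in this copy of the text. If the evaluation function is the correlation-based merit from Hall [22], which is an assumption here, it has the form:

```latex
\[
\text{Merit}_S = \frac{k\,\overline{r_{cf}}}{\sqrt{k + k(k-1)\,\overline{r_{ff}}}}
\]
```

Here $k$ is the number of features in the subset $S$, $\overline{r_{cf}}$ is the mean feature-class correlation and $\overline{r_{ff}}$ is the mean feature-feature correlation. A subset scores well when its features correlate strongly with the class and weakly with each other.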

Figure (10) displays the correlation of all 16 features with each other and with the class.

Figure 10. Correlation coefficients between all 16 attributes

8.2.2. Classification

We used several machine learning classifiers for the classification step. The Naïve Bayes classifier is a supervised machine learning algorithm that works on the principle of conditional probability given by Bayes' theorem [28]. The support vector machine is one of the most popular supervised machine learning algorithms and can efficiently perform linear and nonlinear classification [29]. Decision tree learning and random forest are predictive modelling approaches used in statistics, data mining and machine learning [30],[31]. The k-nearest neighbour (k-NN) algorithm is a non-parametric method proposed for classification and regression by Thomas Cover [32].

8.2.3. Results

Our typical procedure is to first train the model on a dataset. Once it is built, the next step is to test the model on a dataset and collect its predictions, which are then compared with the ground truth to measure the accuracy of the model. There are several ways to perform these steps. The first is to use all the available data for training and to test on a different dataset. The second is to split the available dataset into training and test sets. The last is cross-validation, a technique that reserves a particular sample of the dataset on which the model is not trained and then tests the model on this sample before finalizing it. Because the test data differs from the training data, the measured accuracy better reflects how the model will perform on unseen data.
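The cross-validation option can be illustrated with a small index generator. This is a sketch under stated assumptions: `k_fold_indices` is a hypothetical helper, it uses contiguous folds without shuffling, and it is not the routine used by the authors' tools.

```python
def k_fold_indices(n_samples, k):
    """Yield (train_indices, test_indices) for k contiguous folds of near-equal size."""
    base, extra = divmod(n_samples, k)
    start = 0
    for fold in range(k):
        # The first `extra` folds take one additional sample each.
        size = base + (1 if fold < extra else 0)
        test = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n_samples))
        yield train, test
        start += size


folds = list(k_fold_indices(n_samples=10, k=5))
```

Each sample is held out exactly once, and the model is trained on the remaining folds each time. In practice the records would usually be shuffled first so that every fold contains a mix of classes.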

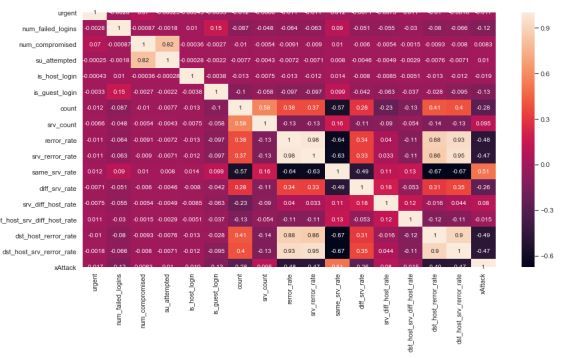

In the 1st experiment we used the training file (train data) for training and a different dataset (test data) for testing. All pre-processing steps were also applied to the test data, in the same way, for our three subsets. The results are shown in the following table.

Table 7. Difference between three subsets with machine learning algorithms

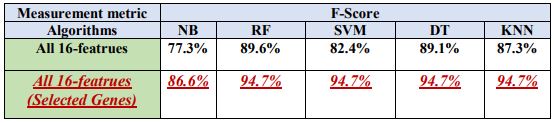

8.3. The Second Experiment

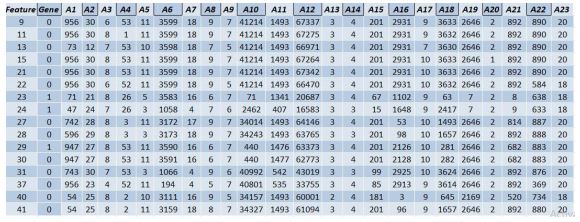

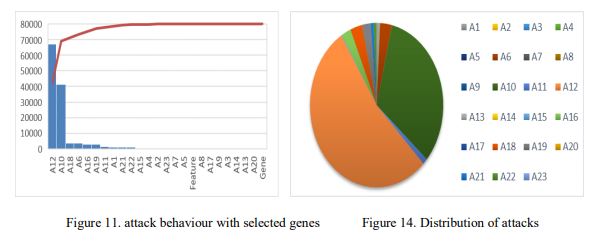

From the previous experiment we found that the [16-feature] subset {9,11,13,15,21,22,23,24,27,28,29,30,31,37,40,41} has higher accuracy than the other subsets, so it is used in this experiment. We discovered that not all feature contents are important in attack detection. We named the feature contents {Genes}. We eliminate the unimportant genes in each feature of the [16-feature] subset. As shown in table (8) and figure (10), we choose only one effective gene in every feature depending on its frequency with attacks.

Figure 12. Gene 1 Distribution in feature 23

Table 8. Attacks symbols

8.3.1. Data Visualization

Table 9. Frequency between attacks and 16 features

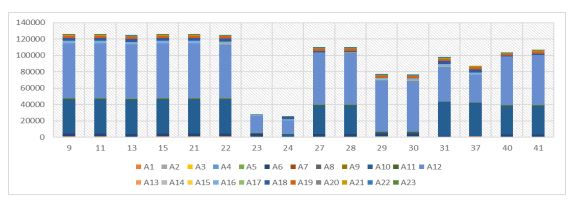

For example, feature number 23, named (count), has 512 genes. We convert all 23 attack types to symbols (A1, A2, …, A23), as shown in table (8). After obtaining the frequency between these genes and the attacks, we found that only gene (1) in feature 23 has a high attack detection rate. We do the same for all 16 features in our subset. The results appear in table (9). Figure (12) shows the distribution of gene 1 in feature 23. Figures (11, 13, 14) display the behaviour of the attacks with the selected genes.
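One way to read the gene selection step is as a frequency count of feature values over attack records. The sketch below follows that reading; it is an interpretation, not the authors' implementation, and the function names and sample records are hypothetical.

```python
from collections import Counter, defaultdict


def select_gene(feature_values, labels):
    """Return the value (gene) that co-occurs with attack records most often,
    together with the per-gene count of attack records."""
    attack_counts = Counter(
        value for value, label in zip(feature_values, labels) if label != "normal"
    )
    gene, _ = attack_counts.most_common(1)[0]
    return gene, dict(attack_counts)


def gene_attack_table(feature_values, labels):
    """Cross-tabulate genes against attack types: table[gene][attack] = count."""
    table = defaultdict(Counter)
    for value, label in zip(feature_values, labels):
        if label != "normal":
            table[value][label] += 1
    return {gene: dict(counts) for gene, counts in table.items()}


# Hypothetical records: values of one feature and the label of each record.
values = [1, 1, 2, 1, 3, 2, 1]
labels = ["neptune", "smurf", "normal", "neptune", "satan", "neptune", "normal"]

best_gene, counts = select_gene(values, labels)
table = gene_attack_table(values, labels)
```

The cross-tabulation corresponds loosely to a frequency table of genes against attack symbols, and `best_gene` is the single value that would be kept for the feature under this interpretation.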

Figure 13. Distribution of 23 attacks in our feature set (16 features)

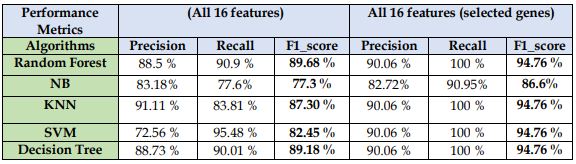

8.3.2. Results

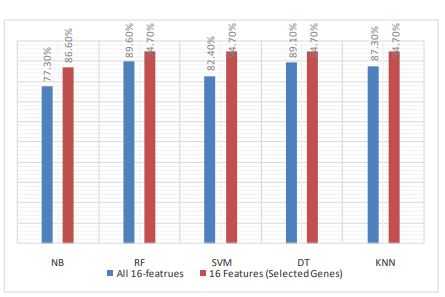

In the 2nd experiment we used only the training set: we split the training data into 70% for training and 30% for testing to measure accuracy reliably. We used the same classification algorithms as in the 1st experiment. The results show that the selected genes give more accurate results than the full contents of the 16 features. This confirms that not all feature contents are important in attack detection, as shown in table 10.
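A 70/30 hold-out split can be sketched as follows. The function name, the fixed seed and the stand-in records are assumptions for illustration; the paper does not say whether the split was shuffled or stratified.

```python
import random


def train_test_split_70_30(records, seed=0):
    """Shuffle a copy of the records and split them 70% / 30%."""
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)
    cut = int(round(0.7 * len(shuffled)))
    return shuffled[:cut], shuffled[cut:]


records = list(range(100))  # hypothetical stand-in for pre-processed rows
train, test = train_test_split_70_30(records)
```

Fixing the seed makes the split reproducible, so that different classifiers are compared on the same held-out records.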

Table 10. Difference between all 16-feature and selected genes

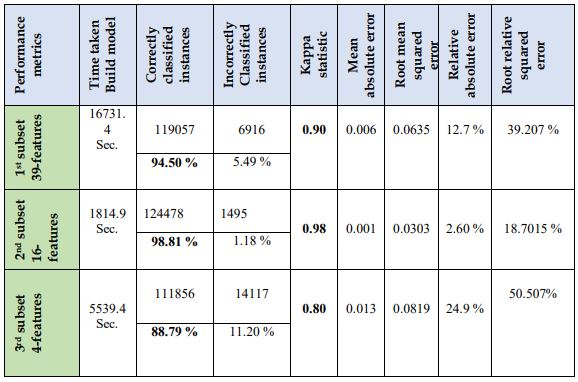

8.4. The Third Experiment

In this experiment, deep learning with a multi-layer perceptron (MLP) was used for the classification step. We performed the experiment with our three subsets (39-feature, 16-feature, 4-feature) and compared the results. MLP is trained with a supervised learning method called back-propagation. It is distinguished from a linear perceptron by its multiple layers and non-linear activation, so it can separate data that is not linearly separable [33]. The total number of instances in each subset is 125973.
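The claim about non-linearly separable data can be illustrated with the XOR function, which no single linear perceptron can compute. The sketch below uses hand-set weights and a step activation purely for clarity. These are illustrative assumptions: a network trained with back-propagation would learn its weights and use a differentiable activation instead.

```python
def step(x):
    """Threshold activation: a simple non-linear function."""
    return 1 if x > 0 else 0


def tiny_mlp(x1, x2):
    """Two-layer perceptron with hand-set weights that computes XOR."""
    h_or = step(x1 + x2 - 0.5)       # hidden unit 1: fires if either input is 1
    h_and = step(x1 + x2 - 1.5)      # hidden unit 2: fires only if both inputs are 1
    return step(h_or - h_and - 0.5)  # output: OR and not AND


outputs = [tiny_mlp(a, b) for a, b in [(0, 0), (0, 1), (1, 0), (1, 1)]]
```

The hidden layer re-represents the inputs so that the output unit can separate them with a single threshold, which is the property the paragraph above attributes to MLP.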

8.4.1. Performance metrics

To evaluate the performance of the experiment, seven well-known statistical indices, widely used in academic studies, help rank the output of the classification. The correctly classified instances are the sum of TP and TN. Similarly, the incorrectly classified instances are the sum of FP and FN. The number of correctly classified instances divided by the total number of instances gives the accuracy. The Kappa statistic measures how closely the instances classified by the machine learning classifier match the data labelled as ground truth, while controlling for the accuracy of a random classifier as measured by the expected accuracy. A value greater than 0 means the classifier does better than chance. The mean absolute error (MAE) is the average magnitude of the differences between predicted and actual values. The root mean square error (RMSE) measures the differences between the values predicted by a model or estimator and the values observed. The relative squared error (RSE) and the root relative squared error (RRSE) are relative to what the error would have been if a simple predictor had been used. More precisely, this simple predictor is just the average of the actual values.
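Three of these indices can be computed directly from their standard definitions. The following sketch assumes a binary confusion matrix and uses hypothetical counts and predictions; it is not meant to reproduce Weka's output.

```python
import math


def cohen_kappa(tp, tn, fp, fn):
    """Cohen's kappa for a binary confusion matrix."""
    total = tp + tn + fp + fn
    observed = (tp + tn) / total
    # Agreement expected by chance, from the row and column marginals.
    expected = ((tp + fp) * (tp + fn) + (tn + fn) * (tn + fp)) / (total * total)
    return (observed - expected) / (1 - expected)


def mae(actual, predicted):
    """Mean absolute error between two equal-length sequences."""
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def rmse(actual, predicted):
    """Root mean square error between two equal-length sequences."""
    return math.sqrt(sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual))


# Hypothetical confusion-matrix counts and class-probability predictions.
kappa = cohen_kappa(tp=40, tn=45, fp=5, fn=10)
error_mae = mae([1, 0, 1, 1], [0.9, 0.2, 0.6, 1.0])
error_rmse = rmse([1, 0, 1, 1], [0.9, 0.2, 0.6, 1.0])
```

With these counts the observed agreement is 0.85 and the chance agreement is 0.5, so kappa is 0.7, well above the value of 0 that a random classifier would obtain.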

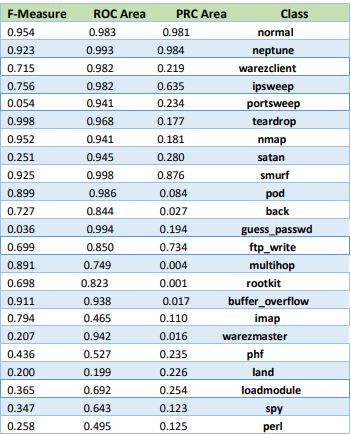

8.4.2. Results

The Waikato Environment for Knowledge Analysis (Weka) version 3.8.3 was used as the simulation tool, on Windows 10 Enterprise with an Intel® Core™ i5-3230M CPU @ 2.60 GHz and 6.00 GB of RAM. For testing we used cross-validation with 5 folds, in which the dataset is divided into five parts of approximately the same size [34]. Tables (A, B, C) describe the MLP performance on the 23 types of attacks that the NSL-KDD dataset contains, for our three subsets (39, 16, 4 features) in this order. The differences between our three subsets and the results of the third experiment are shown in table (11). A comparative discussion is presented in tables (12 & 13) and figures (17, 18).

9.RESULTS DISCUSSION AND PERFORMANCE ANALYSIS

Our three experiments reveal the best and worst approaches. With all dataset features and no feature selection, SVM is preferable, with a higher accuracy (83.44%) than the other machine learning algorithms. With all dataset features and the deep learning algorithm (MLP), accuracy is better (94.5%) than with the machine learning algorithms. With the [16-feature] subset obtained by feature reduction, the preferable machine learning algorithms are RF, with accuracy 91.76%, and DT, with accuracy 91.86%. Selecting genes from the [16-feature] subset gives higher accuracy (94.76%) with machine learning algorithms than using all feature contents. The [16-feature] subset with the deep learning approach (MLP) gives the highest accuracy (98.81%). The [4-feature] subset with MLP gives higher accuracy (88.7%) than with the machine learning algorithms (73.9%). The [16-feature] subset, chosen based on the frequency between features and attacks, has the best results in all three experiments. Accuracy is poor when we use all the features in our dataset without feature selection. Accuracy is also poor with the 4-feature subset, because we did not apply any feature selection method to it; we simply took the features common to previous research. With selection applied to the 16 attributes, the results are more accurate. Feature selection is therefore a very important step before classification. The multi-layer perceptron deep learning technique achieved the highest accuracy for every subset.

Table 11. The performance of MLP technique in attack detection

Table 12. Comparison of F-score between all subsets

Figure 16. (16-features) F score with machine and deep learning

Table 13. Comparison of F-score between 16 features (before & after deleted genes)

Figure 17. (16-features before & after deleted genes)

10.CONCLUSION

Machine and deep learning algorithms have been used in this paper to improve the classification capability of intrusion detection systems. We conducted three experiments using the NSL-KDD dataset, which was divided into three subsets using feature reduction approaches. In the 1st experiment we used the NB, SVM, KNN, RF and DT algorithms for classification and noticed that the 16-feature subset had the best results. In the 2nd experiment we proposed a model based on genes selected from every feature: when we eliminate the unimportant genes from each feature, we obtain higher accuracy than with all feature contents. In the 3rd experiment we used a deep learning technique, the multilayer perceptron (MLP), for classification. From these experiments we found that the 16-feature subset has high accuracy and that not all feature contents are important for attack detection. Our future work will focus on new datasets such as UNSW-NB15, which contains up-to-date attacks. Using NS3 or OPNET, we can try our system in a live attack scenario.

Table (c)

CONFLICTS OF INTEREST

The authors declare no conflict of interest.

REFERENCES:

[1] Zargar, G. R. “Category Based Intrusion Detection Using PCA”. International Journal of Information Security, 3, 259-271, October 2012.

[2] Das, A., Nguyen, D., Zambreno, J., Memik, G. and Choudhary, A. “An FPGA-Based Network Intrusion Detection Architecture”, IEEE Transactions on Information Forensics and Security, Vol. 3, No. 1, pp. 118-132, 2008.

[3] H.J. Liao, C.H.R. Lin, Y.C. Lin and K.Y. Tung, “Intrusion detection system: A comprehensive review.”, Journal of Network and Computer Applications, Vol.36, issue.1, pp. 16-24, 2013.

[4] Chou, T. S. Yen, K. K. and Luo, J. Network Intrusion Detection Design Using Feature Selection of Soft Computing Paradigms, International Journal of Computational Intelligence, Vol. 4, No. 3, pp. 196-208, 2008.

[5] S. Revathi, and A. Malathi, “A detailed analysis on NSL-KDD dataset using various machine learning techniques for intrusion detection.” International Journal of Engineering Research & Technology (IJERT) 2, no. 12, 1848-1853, 2013.

[6] A. Alazab, M. Hobbs, J. Abawajy and M. Alazab. “Using feature selection for intrusion detection system.” In 2012 international symposium on communications and information technologies (ISCIT), pp. 296-301. IEEE, 2012.

[7] L.Dhanabal and S.P. Shantharajah, ” A study on NSL-KDD dataset for intrusion detection system based on classification algorithms”, International Journal of Advanced Research in Computer and Communication Engineering, Vol. 4, Issue 6, pp.446-452, June 2015

[8] M. Tavallaee, N. Stakhanova and A. A. Ghorbani, “Toward credible evaluation of anomaly-based intrusion-detection methods”, IEEE Transactions on Systems, Man, and Cybernetics, Part C (Applications and Reviews), vol.40, issue 5, pp. 516-524 ,2010.

[9] M. Tavallaee, E. Bagheri, W. Lu, and A. A. Ghorbani, “A detailed analysis of the KDD CUP 99 data set”, 2009 IEEE symposium on computational intelligence for security and defense applications (pp.1-6). IEEE. 2009.

[10] A. Shrivastava, J. Sondhi and S. Ahirwar, “Cyber attack detection and classification based on machine learning technique using nsl kdd dataset”, Int. Research J. Eng. Appl. Sci., vol.5, issue 2, pp.28-31, 2017.

[11] D. H. Deshmukh, T. Ghorpade and P. Padiya, “Improving classification using preprocessing and machine learning algorithms on NSL-KDD dataset”, 2015 International Conference on Communication, Information & Computing Technology (ICCICT) (pp. 1-6). IEEE, 2015.

[12] B. Ingre and A. Yadav,” Performance analysis of NSL-KDD dataset using ANN”, 2015 international conference on signal processing and communication engineering systems (pp. 92-96). IEEE. 2015.

[13] H.Chae and S.Choi,” Feature Selection for efficient Intrusion Detection using Attribute Ratio”, INTERNATIONAL JOURNAL OF COMPUTERS AND COMMUNICATIONS, Vol. 8, pp.134-139, 2014.

[14] M. Ambusaidi, X. He, P. Nanda and Z. Tan, “Building an Intrusion Detection System Using a Filter-Based Feature Selection Algorithm,” IEEE Transactions on Computers, vol. 65, no.10, pp. 2986-2998, 2016.

[15] Y. Wahba, E. ElSalamouny, and G. ElTaweel, “Improving the performance of multi-class intrusion detection systems using feature reduction”, IJCSI International Journal of Computer Science Issues, Vol. 12, Issue 3, pp. 255-262, May 2015.

[16] D. A. Kumar and S. R. Venugopalan, “The effect of normalization on intrusion detection classifiers (Naïve Bayes and J48)”, International Journal on Future Revolution in Computer Science & Communication Engineering, Vol. 3, Issue 7, pp. 60-64, July 2017.

[17] A. Puri and N. Sharma, “A Novel Technique for Intrusion Detection System for Network Security Using Hybrid SVM-CART”, International Journal of Engineering Development and Research, Vol. 5, Issue 2, pp. 155-161, 2017.

[18] M. Abdullah, A. Alshannaq, A. Balamash and S. Almabdy, “Enhanced intrusion detection system using feature selection method and ensemble learning algorithms”, International Journal of Computer Science and Information Security (IJCSIS), Vol. 16, No. 2, pp. 48-55, 2018.

[19] L. Gnanaprasanambikai and N. Munusamy, “Data Pre-Processing and Classification for Traffic Anomaly Intrusion Detection Using NSLKDD Dataset”, Cybernetics and Information Technologies, Vol. 18, pp. 111-119, 2018.

[20] K. A. Taher, B. M. Y. Jisan and M. M. Rahman, “Network intrusion detection using supervised machine learning technique with feature selection”, 2019 International Conference on Robotics, Electrical and Signal Processing Techniques (ICREST), pp. 643-646, 2019.

[21] S. Aljawarneh, M. Aldwairi, and M. B. Yassein, “Anomaly-based intrusion detection system through feature selection analysis and building hybrid efficient model”, Journal of Computational Science, vol. 25, pp. 152-160, 2018.

[22] M. A. Hall, “Correlation-based feature selection for machine learning”, 1999.

[23] Patro, S., and Kishore Kumar Sahu. “Normalization: A preprocessing stage.” arXiv preprint arXiv:1503.06462 (2015).

[24] A. Alazab, M. Hobbs, J. Abawajy and M. Alazab. “Using feature selection for intrusion detection system.” In 2012 international symposium on communications and information technologies (ISCIT), pp. 296-301. IEEE, 2012.

[25] Tesfahun, Abebe, and D. Lalitha Bhaskari. “Effective hybrid intrusion detection system: A layered approach.” International Journal of Computer Network and Information Security 7, no. 3 (2015): 35.

[26] Harb, Hany M., Afaf A. Zaghrot, Mohamed A. Gomaa, and Abeer S. Desuky. “Selecting optimal subset of features for intrusion detection systems.” (2011).

[27] Latah, Majd, and Levent Toker. “Towards an efficient anomaly-based intrusion detection for software-defined networks.” IET networks 7, no. 6 (2018): 453-459.

[28] Maron, Melvin Earl. “Automatic indexing: an experimental inquiry.” Journal of the ACM (JACM) 8, no. 3 (1961): 404-417.

[29] Cortes, Corinna, and Vladimir Vapnik. “Support-vector networks.” Machine learning 20, no. 3 (1995): 273-297.

[30] Wu, Xindong, Vipin Kumar, J. Ross Quinlan, Joydeep Ghosh, Qiang Yang, Hiroshi Motoda, Geoffrey J. McLachlan et al. “Top 10 algorithms in data mining.” Knowledge and information systems 14, no. 1 (2008): 1-37.

[31] Piryonesi, S. Madeh, and Tamer E. El-Diraby. “Data Analytics in Asset Management: Cost-Effective Prediction of the Pavement Condition Index.” Journal of Infrastructure Systems 26, no. 1 (2020): 04019036.

[32] Altman, Naomi S. “An introduction to kernel and nearest-neighbor nonparametric regression.” The American Statistician 46, no. 3 (1992): 175-185.

[33] Rosenblatt, Frank. Principles of neurodynamics: perceptrons and the theory of brain mechanisms. No. VG-1196-G-8. Cornell Aeronautical Lab Inc Buffalo NY, 1961.

[34] Stone, Mervyn. “Cross‐validatory choice and assessment of statistical predictions.” Journal of the Royal Statistical Society: Series B (Methodological) 36, no. 2 (1974): 111-133.

[35] Tavallaee, et al. “A Detailed Analysis of the KDD CUP 99 Data Set.” 2009 IEEE Symposium on Computational Intelligence for Security and Defense Applications, 2009.

[36] Huffman, David A. “A method for the construction of minimum-redundancy codes.” Proceedings of the IRE 40, no. 9 (1952): 1098-1101.

AUTHORS

HAJAR M. ELKASSABI is a researcher at the Faculty of Engineering, Mansoura University, Egypt. She received the B.Sc. degree in Communication Engineering from Mansoura University, Egypt, in 2009, and a diploma in electrical engineering from Mansoura University, Egypt, in 2012. She received a diploma in the networking track from the Ministry of Communication and Information Technology (MCIT) in 2014. Her current research interests include network security, cloud computing, and Internet routing.

MOHAMMED M. ASHOUR is an assistant professor at the Faculty of Engineering, Mansoura University, Egypt. He received a B.Sc. degree from Mansoura University, Egypt, an M.Sc. degree from Mansoura University, Egypt, in 1996, and a Ph.D. degree from Mansoura University, Egypt, in 2005. He worked as a lecturer assistant at Mansoura University, Egypt, from 1997 and has been an assistant professor since 2005. His fields of interest are network modelling and security, wireless communication, and digital signal processing.

FAYEZ W. ZAKI is a professor at the Faculty of Engineering, Mansoura University. He received the B.Sc. in Communication Engineering from Menofia University, Egypt, in 1969, the M.Sc. in Communication Engineering from Helwan University, Egypt, in 1975, and the Ph.D. from Liverpool University in 1982. He worked at Mansoura University, Egypt, as a demonstrator from 1969, a lecturer assistant from 1975, a lecturer from 1982, an associate professor from 1988, and a professor from 1994. He was head of the Electronics and Communication Engineering Department, Faculty of Engineering, Mansoura University, from 2002 to 2005. He has supervised several M.Sc. and Ph.D. theses and has published several papers in refereed journals and international conferences. He is now a member of the professorship promotion committee in Egypt.